Cognition involves the flow of information through sensory, working, and long-term memory banks in the brain. Sensory memory temporarily holds raw data, working memory manipulates and organizes information, and long-term memory stores it indefinitely by creating strategic electrical wiring between neurons. Learning amounts to increasing the quantity, depth, retrievability, and generalizability of concepts and skills in a student’s long-term memory. Limited working memory capacity creates a bottleneck in the transfer of information into long-term memory, but cognitive learning strategies can be used to mitigate the effects of this bottleneck.

This post is part of the book The Math Academy Way (Working Draft, Jan 2024). Suggested citation: Skycak, J., advised by Roberts, J. (2024). Cognitive Science of Learning: How the Brain Works. In The Math Academy Way (Working Draft, Jan 2024). https://justinmath.com/cognitive-science-of-learning-how-the-brain-works/


At the most fundamental level, learning is the creation of strategic electrical wiring between neurons (“brain cells”) that improves the brain’s ability to perform a task.

When the brain thinks about objects, concepts, associations, etc., it represents these things by activating different patterns of neurons with electrical impulses. Whenever a neuron is activated with electrical impulses, the impulses naturally travel through its outward connections to reach other neurons, potentially causing those other neurons to activate as well. By creating strategic connections between neurons, the brain can more easily, quickly, accurately, and reliably activate more intricate patterns of neurons.

As one might expect, it is extraordinarily complicated to understand what these specific brain patterns are, how they interact, and how the brain identifies strategic ways to improve its connectivity. However, to some extent, these are just nature’s way of implementing cognition – and the overarching cognitive processes of the brain are much better understood.

Sensory, Working, and Long-Term Memory

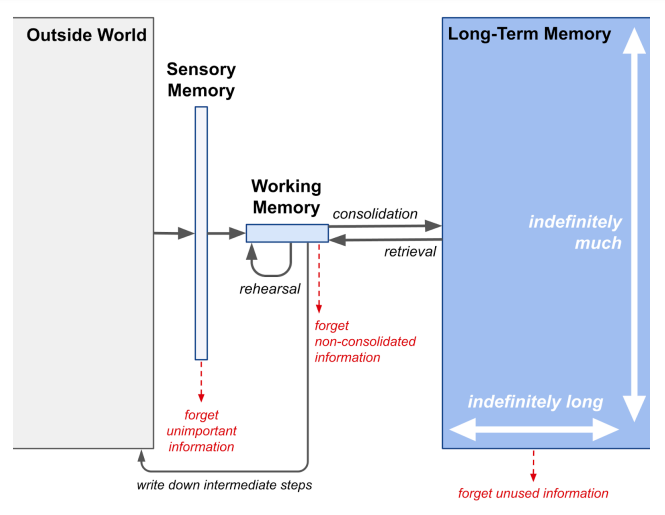

At a high level, human cognition is characterized by the flow of information across three memory banks:

- Sensory memory temporarily holds a large amount of raw data observed through the senses (sight, hearing, taste, smell, and touch), only for several seconds at most, while relevant data is transferred to short-term memory for more sophisticated processing.

- Short-term memory, and more generally, working memory, has a much lower capacity than sensory memory, but it can store the information about ten times longer. Working memory consists of short-term memory along with capabilities for organizing, manipulating, and generally “working” with the information stored in short-term memory. The brain’s working memory capacity represents the degree to which it can focus activation on relevant neural patterns and persistently maintain their simultaneous activation, a process known as rehearsal.

- Long-term memory effortlessly holds indefinitely many facts, experiences, concepts, and procedures, for indefinitely long, in the form of strategic electrical wiring between neurons. Wiring induces a “domino effect” by which entire patterns of neurons are automatically activated as a result of initially activating a much smaller number of neurons in the pattern. The process of storing new information in long-term memory is known as consolidation. At a cognitive level, learning can be described as a positive change in long-term memory.

These memory banks work together to form the following pipeline for processing information:

- Sensory memory receives a stimulus from the environment and passes on important details to working memory.

- Working memory holds and manipulates those details, often augmenting or substituting them with related information that was previously stored in long-term memory.

- Long-term memory curates important information as though it were writing a “reference book” for the working memory.

Note, however, that there is a crucial conceptual difference between long-term memory and a reference book: information in long-term memory can be forgotten. The text in a reference book remains there forever, accessible as always, regardless of whether you read it. The representations in long-term memory, by contrast, gradually become harder to retrieve if they are not used, resulting in forgetting. The phenomenon of forgetting in long-term memory has been widely researched and can be characterized as follows (Hardt, Nader, & Nadel, 2013):

- “…[F]orgetting refers to the absence of expression of previously properly acquired memory in a situation that normally would cause such expression. This can reflect actual memory loss or a failure to retrieve existing memory.”

However, the lower-level mechanisms underlying forgetting in long-term memory are not yet well understood.

There are two complementary perspectives by which we can think about this pipeline:

- Encoding perspective: the pipeline converts or “encodes” information from the outside world into a representation that can be stored in long-term memory and later recalled.

- Executive function (or cognitive control) perspective: the pipeline is centered around working memory, which pulls relevant information from sensory and long-term memory into an area where it can be combined, transformed, and used to guide behavior to achieve goals.

In the context of mathematical talent development, once a student is beyond the stage of learning how to read and count, we are less concerned with their sensory memory and more concerned with their long-term memory. The goal of instruction is to increase the quantity, depth, retrievability, and generalizability of mathematical concepts and skills in the student’s long-term memory.

The student’s working memory capacity is a bottleneck in the transfer of information into their long-term memory. However, by leveraging cognitive learning strategies and properly scaffolding and adapting instruction to the student’s individual needs, we can minimize the degree to which their working memory capacity limits their learning, thereby maximizing the transfer of new information and the retention of existing information in long-term memory.

Design Constraints

The brain’s information-processing pipeline is designed to be incredibly efficient. However, even the most efficient designs have limitations. Design is all about balancing trade-offs to achieve the best possible outcome in the face of constraints. To understand the constraints and the rationale behind a design, it can be helpful to attempt some naive critiques.

Critique: Why is long-term memory needed? Why can’t the brain just hold everything in working memory forever through rehearsal?

Rationale: Rehearsal requires a lot of effort. It is very taxing on the brain. When the brain engages in rehearsal, it’s like a muscle that is lifting a weight.

Just as a muscle has a limit to the amount of weight it can hold, the brain has a limit to the amount of new information it can hold in working memory via rehearsal. Most people can only hold about 7 digits (or more generally 4 chunks of coherently grouped items) simultaneously and only for about 20 seconds (Miller, 1956; Cowan, 2001; Brown, 1958). And that assumes they do not need to perform any mental manipulation of those items. If they do, then fewer items can be held due to competition for limited processing resources (Wright, 1981).

Long-term memory solves this problem by providing a place where the brain can store lots of information for a long time without requiring much effort.
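As a rough illustration of the chunking idea above, the following Python sketch groups a digit string into chunks and compares the number of chunks against a capacity limit. The names `chunk_digits` and `fits_in_working_memory`, the fixed chunk size, and the hard cutoff of 4 chunks are simplifying assumptions made for illustration; they are not a model taken from the cited studies, and real chunks only help when the grouping is meaningful to the person.

```python
def chunk_digits(digits: str, chunk_size: int) -> list[str]:
    """Split a digit string into consecutive groups of at most chunk_size digits."""
    return [digits[i:i + chunk_size] for i in range(0, len(digits), chunk_size)]


def fits_in_working_memory(items: list[str], capacity: int = 4) -> bool:
    """Assumed toy rule: a list can be held if it has at most `capacity` chunks."""
    return len(items) <= capacity


digits = "314159265358"            # 12 digits
single = chunk_digits(digits, 1)   # 12 separate one-digit items
grouped = chunk_digits(digits, 3)  # 4 three-digit chunks

print(len(single), fits_in_working_memory(single))    # 12 False
print(len(grouped), fits_in_working_memory(grouped))  # 4 True
```

The same 12 digits exceed the assumed limit when held one by one but fit when grouped, which is the sense in which capacity is better measured in chunks than in raw items.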

Critique: Why doesn’t the brain just store everything it encounters in long-term memory? That way, it would never forget anything.

Rationale: When it comes to information storage, more is not always better. In order for it to be worthwhile to store a piece of information, the benefit must offset the cost. Creating connections between neurons is costly in the sense that it requires biological resources – the connections are physical growths between cells, which means they have to be actively constructed and maintained by the body.

To illustrate with a concrete example, suppose that you want to buy a biography book that will help you understand somebody’s background and their impact on society. One book contains 300 pages, costs $20, and covers formative experiences in their childhood, their career arc, and occasional anecdotes to illustrate key points and themes. Another book contains 10,000 pages, costs $1,000, covers all of the information in the first book, and also includes a description of every single meal the person ate throughout their life. Unless you have a specific, intense interest in this person’s dietary habits (which you probably don’t), it’s easy to see that the first option is superior.

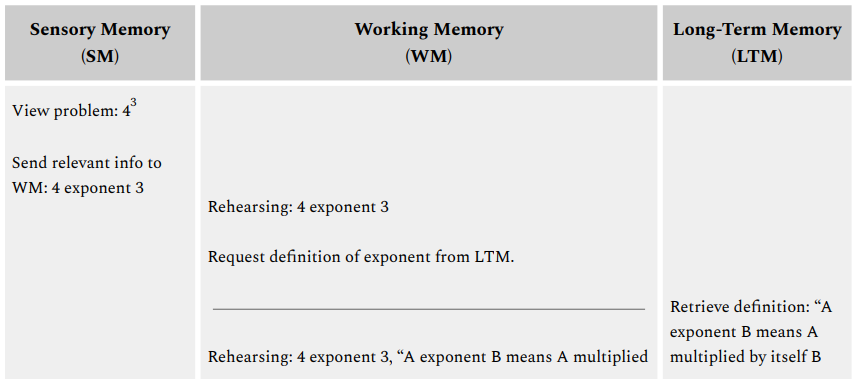

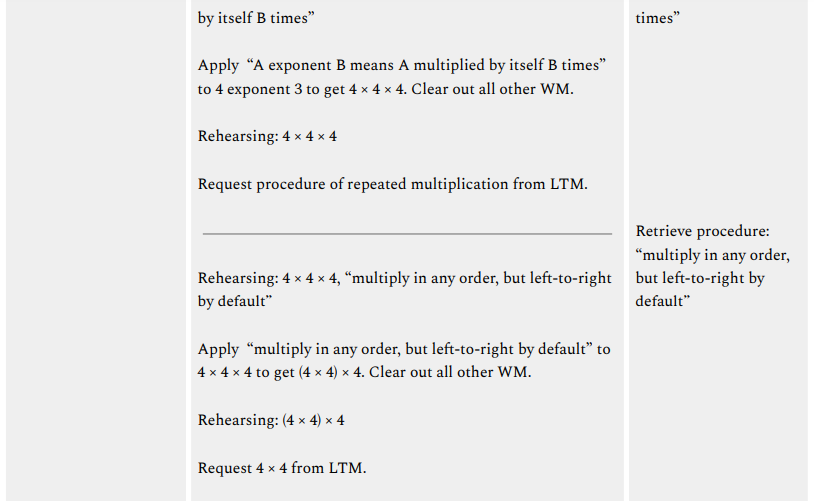

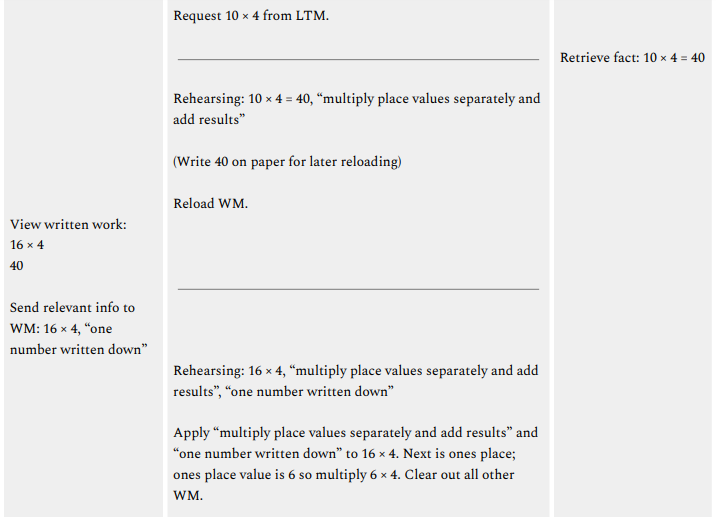

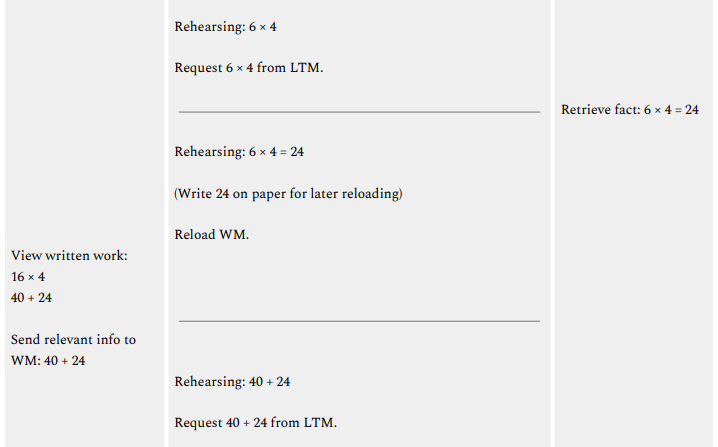

Case Study: Information Flow During a Computation

To illustrate how information flows through these memory banks when solving a math problem, let’s analyze what happens as we compute $4^3$ using typical arithmetic strategies while writing down some intermediate steps. (Remember that exponentiation is just repeated multiplication: $4^3$ means to take three 4’s and multiply them together, that is, $4^3 = 4 \times 4 \times 4 = 64$.)

First, let’s get a sense of how each memory bank will help us solve the problem:

- Sensory memory will capture visual data that lets us read the problem or any intermediate work that we’ve written down, thereby allowing the written information to be loaded into working memory. It will also filter out any distractions (e.g. background noise) as we solve the problem.

- Working memory will hold the relevant pieces of the problem, request additional information from long-term memory, and apply that information to incrementally transform the pieces of the problem into the solution. Our problem-solving narrative will take place within the working memory.

- Long-term memory will, upon request from working memory, produce definitions, facts, and procedures that we learned previously. It is like an internal “reference book” that we can use to look up additional information that would be helpful while solving the current problem.

It’s worth re-emphasizing that the problem-solving narrative will take place within the working memory. Sensory and long-term memory will supply working memory with information, which working memory will combine, transform, and use to guide our behavior to solve the problem. As researchers elaborate (Roth & Courtney, 2007):

- “Working memory (WM) is the active maintenance of currently relevant information so that it is available for use. A crucial component of WM is the ability to update the contents when new information becomes more relevant than previously maintained information. New information can come from different sources, including from sensory stimuli (SS) or from long-term memory (LTM).

…

In order for information in working memory to guide behavior optimally… it must reflect the most relevant information according to the current context and goals. Since the context and the goals change frequently it is necessary to update the contents of WM selectively with the most relevant information while protecting the current contents of WM from interference by irrelevant information.

…

There are… many ways in which WM can be changed, including through the manipulation of information being maintained (Cohen et al., 1997; D’Esposito, Postle, Ballard and Lease, 1999), the addition or removal of items being maintained (Andres, Van der Linden and Parmentier, 2004), or the replacement of one item with another (Roth, Serences, and Courtney, 2006).”

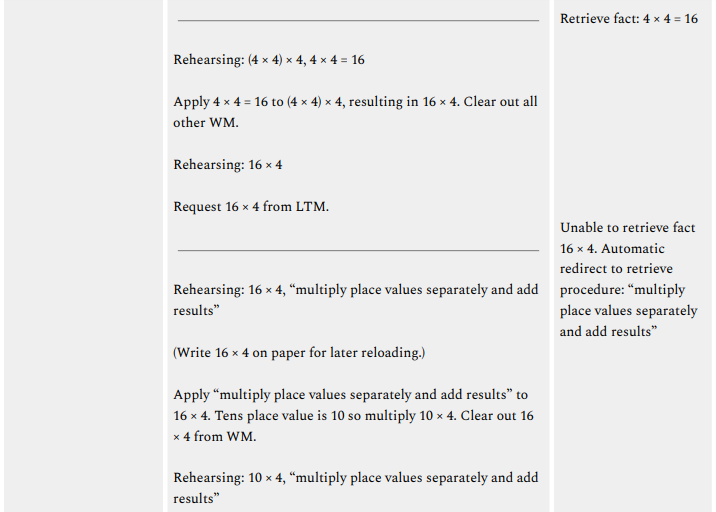

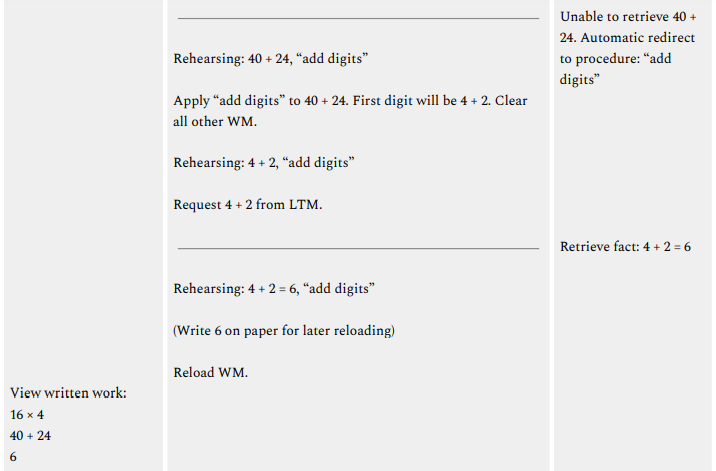

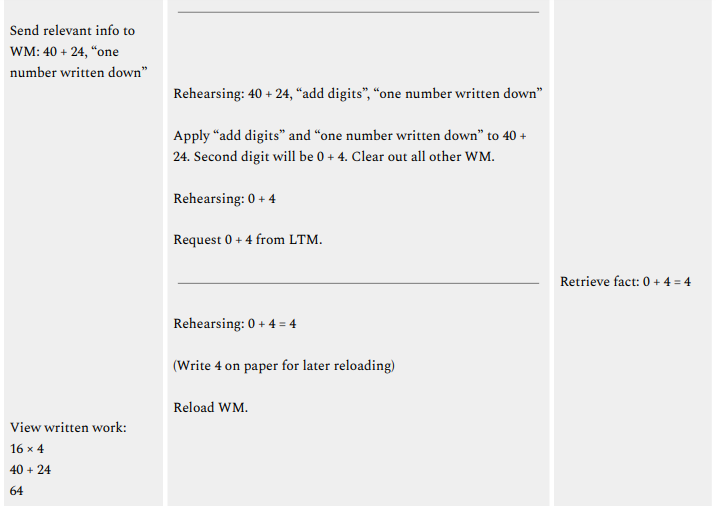

Now, let’s walk through the specific steps needed to solve the problem while observing what happens in each memory bank.
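The arithmetic itself can be written as a single chain. This is a reconstruction from the facts and procedures inventoried later in this section, so the exact order of the steps is an assumption:

$$
\begin{aligned}
4^3 &= 4 \times 4 \times 4 \\
    &= 16 \times 4 \\
    &= (10 \times 4) + (6 \times 4) \\
    &= 40 + 24 \\
    &= 64
\end{aligned}
$$

The final addition is carried out digit by digit: the ones digits give $0 + 4 = 4$ and the tens digits give $4 + 2 = 6$, which together make 64.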

What learning resulted from this computation? Remember that learning occurs when the wiring of long-term memory is changed in a positive way that increases a student’s ability to perform a task. This can involve any combination of wiring up new information, wiring up connections between existing pieces of information, reorganizing existing wiring so that the information can be retrieved more efficiently, etc.

With this in mind, let’s take inventory of the processes that occurred within long-term memory in the example above:

- Retrieval of definitions:

    - “A exponent B means A multiplied by itself B times”

- Retrieval of facts:

    - 4 × 4 = 16

    - 10 × 4 = 40

    - 6 × 4 = 24

    - 4 + 2 = 6

    - 0 + 4 = 4

- Redirects to procedures (and retrieval/execution of those procedures):

    - 4 × 4 × 4 $\to$ “multiply in any order, but left-to-right by default”

    - 16 × 4 $\to$ “multiply place values separately and add results”

    - 40 + 24 $\to$ “add digits”

All of these pieces of information will become further consolidated in long-term memory, and there will be additional wiring connecting these component skills as part of a larger procedure for computing exponents.

The fact $4^3 = 64$ will also begin consolidating in long-term memory (though it will soon be forgotten unless it is reviewed repeatedly over time). Indeed, many people who frequently perform mental math with exponents know their cubes from $1^3$ to $6^3$ by heart and can simply retrieve their values as opposed to computing them.
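The difference between computing a value and retrieving it can be mimicked in a short Python sketch. Everything here is a hypothetical illustration: the `long_term_memory` dictionary, the `retrieve` helper, and the `four_cubed` function are invented names, and a dictionary lookup is only a loose stand-in for recall. The sketch also stores the new fact immediately, whereas real consolidation is gradual and depends on review.

```python
# Toy "long-term memory": arithmetic facts assumed to be known by heart.
long_term_memory = {
    ("mul", 4, 4): 16,
    ("mul", 10, 4): 40,
    ("mul", 6, 4): 24,
    ("add", 4, 2): 6,
    ("add", 0, 4): 4,
}

retrieval_log = []  # every lookup requested by "working memory"


def retrieve(key: tuple[str, int, int]) -> int:
    """Look up a stored fact and record that it was retrieved."""
    retrieval_log.append(key)
    return long_term_memory[key]


def four_cubed() -> int:
    """Compute 4^3, or simply retrieve it if it is already stored."""
    if ("pow", 4, 3) in long_term_memory:
        return retrieve(("pow", 4, 3))

    # 4 x 4 x 4: multiply left to right, starting with 4 x 4.
    sixteen = retrieve(("mul", 4, 4))

    # 16 x 4: multiply place values separately (10 x 4 and 6 x 4).
    tens_part, ones_part = (sixteen // 10) * 10, sixteen % 10
    forty = retrieve(("mul", tens_part, 4))
    twenty_four = retrieve(("mul", ones_part, 4))

    # 40 + 24: add digits column by column (4 + 2 and 0 + 4).
    tens = retrieve(("add", forty // 10, twenty_four // 10))
    ones = retrieve(("add", forty % 10, twenty_four % 10))
    result = 10 * tens + ones

    # Simplification: the new fact is stored at once.
    long_term_memory[("pow", 4, 3)] = result
    return result


first = four_cubed()
steps_first = len(retrieval_log)
second = four_cubed()
steps_second = len(retrieval_log) - steps_first

print(first, steps_first)    # 64 5
print(second, steps_second)  # 64 1
```

The first call needs five separate retrievals to assemble the answer, while the second call needs only one, which is the practical benefit of knowing a fact by heart.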

Neuroscience of Working Memory

Recall that when the brain thinks about objects, concepts, associations, etc., it represents these things by activating different patterns of neurons with electrical impulses. Loosely speaking, the brain’s working memory capacity represents the degree to which it can focus activation on relevant neural patterns and persistently maintain their simultaneous activation. The cognitive load of a task represents the level of exertion that the brain would experience while completing the task.

(More strictly speaking: higher working memory capacity refers not to the ability to sustain more neural activity in the energy sense, but rather, the ability to sustain relevant neural activity while suppressing interference from irrelevant neural activity. At a biological level, hitting a working memory capacity limit does not entail exhausting one’s ability to maintain more neural activity, but rather exhausting one’s ability to maintain focus and attention, that is, appropriate concentration or allocation of one’s neural activity.)

As summarized by D’Esposito (2007):

- *“…[T]he neuroscientific data presented in this paper are consistent with most or all neural populations being able to retain information that can be accessed and kept active over several seconds, via persistent neural activity in the service of goal-directed behaviour.

The observed persistent neural activity during delay tasks may reflect active rehearsal mechanisms. Active rehearsal is hypothesized to consist of the repetitive selection of relevant representations or recurrent direction of attention to those items.

…

Research thus far suggests that working memory can be viewed as neither a unitary nor a dedicated system. A network of brain regions, including the PFC [prefrontal cortex], is critical for the active maintenance of internal representations that are necessary for goal-directed behaviour. Thus, working memory is not localized to a single brain region but probably is an emergent property of the functional interactions between the PFC and the rest of the brain.”*

Long-term learning is represented by the creation of strategic electrical wiring between neurons. Whenever a neuron is activated with electrical impulses, the impulses naturally travel through its outward connections to reach other neurons, potentially causing those other neurons to activate as well. By creating strategic connections between neurons, the brain can more easily, quickly, accurately, and reliably activate more intricate patterns of neurons.

Talcott (2021) summarizes this process as follows:

- *“Individual neurons can be thought of as rather simple biological batteries, each maintaining a gradient of biochemical ions across its cell membrane, which results in a small, local electrical charge — or potential.

…

Incoming signals from neighbouring brain cells are communicated to the neuron’s dendrites and act to continuously modify the magnitude of the neuron’s electrical charge. When the sum of signals from other neurons drives the electrical gradient to and then past a critical voltage, an electrical signal — the action potential — is generated and propagated along the neuron’s axon. This signal ultimately modulates the activity of other neurons to which it connects. This process of synaptic transmission comprises the release of neurotransmitter chemicals at the junction between two cells — the synapse. Neurotransmitters released in response to the action potential in the pre-synaptic cell bind to receptors on the dendrites of the post-synaptic cell. The effects of such neurotransmitter binding serve to modify the electrical potential in the cell, either exciting it toward generating an action potential or inhibiting it from doing so.

…

Cognition — our thinking, reasoning and learning processes — are derived from activity in neural networks within the brain… Neurodevelopment is a lifelong process involving the modification of the structural and functional properties of the brain… One of the most striking aspects of the post-natal neurodevelopmental period in early childhood is in this near continuous refinement of neural connectivity, including both the strengthening of productive synapses and elimination of those that are less robust or redundant… Structural and functional connectivity provides mechanisms for implementing adaptation of the brain in response to an individual’s experience of the world. As children are born with nearly a full complement of brain cells, adaptation of responses to environmental change — the underlying basis of learning for any organism — is accomplished mainly through modifying neural connectivity. Connectivity increases in parallel with children’s advancement of their cognitive capacities and learning achievement.

Adapted from a theory first articulated by Donald Hebb in the 1940, one well-supported principle regarding the relationships between brain structure and function in the developing brain is ‘what fires together, wires together’… When reinforced through repetition (experience), this coupling increases the probability of their activity being coincident in the future. This feedback process also works in reverse, such that connections that are not actively reinforced can be eliminated through a competitive elimination process, which favours the survival of more functionally adaptive networks at the expense of less efficient or redundant competitor networks through development…

These mechanisms of synaptic plasticity are widely considered to be a predominant way through which information is coded and retained in brain networks… Learning and memory (a cognitive demonstration of learning through recall of material to which an individual has been exposed) are therefore both expressed in the brain and related at the neural level to modification of connectivity within neural networks in response to repeated patterns of environmental stimuli and their associations.”*
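The “fires together, wires together” principle and its reverse can be sketched as a simple update rule on a single connection strength. This is a toy model under stated assumptions: the function name `hebbian_step`, the `learning_rate` and `decay` values, and the choice to keep the weight between 0 and 1 are all invented for illustration and are not taken from Talcott (2021) or from any biological measurement.

```python
def hebbian_step(weight: float, pre_active: bool, post_active: bool,
                 learning_rate: float = 0.1, decay: float = 0.02) -> float:
    """Return the connection strength after one time step.

    Assumed toy rule: if both neurons are active together, the connection
    moves toward 1 (strengthening); otherwise it shrinks slightly toward 0
    (weakening through lack of reinforcement).
    """
    if pre_active and post_active:
        return weight + learning_rate * (1.0 - weight)
    return weight - decay * weight


weight = 0.2

# Repeated co-activation, standing in for practice.
for _ in range(20):
    weight = hebbian_step(weight, pre_active=True, post_active=True)
after_practice = weight

# A stretch with no co-activation, standing in for disuse.
for _ in range(20):
    weight = hebbian_step(weight, pre_active=True, post_active=False)
after_disuse = weight

print(round(after_practice, 3), round(after_disuse, 3))
```

In this sketch the connection ends up stronger after practice than where it started, and then weakens again during disuse without returning all the way to its starting value. The exact numbers depend entirely on the assumed parameters.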

Wiring induces a “domino effect” by which entire patterns of neurons are automatically activated as a result of initially activating a much smaller number of neurons in the pattern. However, when the brain is initially learning something, the corresponding neural pattern has not been “wired up” yet, which means that the brain has to devote effort to activating each neuron in the pattern. In other words, because the dominos have not been set up yet, each one has to be toppled in a separate stroke of effort. This imposes severe limitations on how much new information the brain can hold simultaneously in working memory.
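The domino analogy can be made concrete with a small graph sketch in Python. It is a deliberately crude model: the neuron labels, the `wired` and `unwired` dictionaries, and the function `spread_activation` are hypothetical, and the sketch assumes that any active neuron always activates every neuron it connects to, which ignores thresholds and inhibition.

```python
from collections import deque


def spread_activation(connections: dict[str, list[str]], seeds: list[str]) -> set[str]:
    """Return every neuron reached by following outward connections from the seeds."""
    active = set(seeds)
    queue = deque(seeds)
    while queue:
        neuron = queue.popleft()
        for target in connections.get(neuron, []):
            if target not in active:
                active.add(target)
                queue.append(target)
    return active


pattern = ["a", "b", "c", "d"]

unwired: dict[str, list[str]] = {}             # nothing learned yet
wired = {"a": ["b"], "b": ["c"], "c": ["d"]}   # learned chain a -> b -> c -> d

print(sorted(spread_activation(unwired, ["a"])))     # ['a']
print(sorted(spread_activation(wired, ["a"])))       # ['a', 'b', 'c', 'd']
print(sorted(spread_activation(unwired, pattern)))   # ['a', 'b', 'c', 'd']
```

With the wiring in place, activating one neuron is enough to bring up the whole pattern. Without it, the full pattern appears only when every neuron is activated individually, which corresponds to toppling each domino in a separate stroke of effort.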

References

Brown, J. (1958). Some tests of the decay theory of immediate memory. Quarterly journal of experimental psychology, 10(1), 12-21.

Cowan, N. (2001). The magical number 4 in short-term memory: A reconsideration of mental storage capacity. Behavioral and brain sciences, 24(1), 87-114.

D’Esposito, M. (2007). From cognitive to neural models of working memory. Philosophical Transactions of the Royal Society B: Biological Sciences, 362(1481), 761-772.

Hardt, O., Nader, K., & Nadel, L. (2013). Decay happens: the role of active forgetting in memory. Trends in cognitive sciences, 17(3), 111-120.

Miller, G. A. (1956). The magical number seven, plus or minus two: Some limits on our capacity for processing information. Psychological review, 63(2), 81-97.

Roth, J. K., & Courtney, S. M. (2007). Neural system for updating object working memory from different sources: sensory stimuli or long-term memory. Neuroimage, 38(3), 617-630.

Talcott, J. B. (2021). The neurodevelopmental underpinnings of children’s learning: Connectivity is key.

Wright, R. E. (1981). Aging, divided attention, and processing capacity. Journal of Gerontology, 36(5), 605-614.
