I’m using an LLM to learn biology. My overall conclusion is that IF you could learn successfully, long-term, by self-studying textbooks on your own, and the only thing keeping you from learning a new subject is a slight lack of time, THEN you can probably use LLM prompting to speed up that process a bit, which can help you pull the trigger on learning some stuff you previously didn’t have time for. BUT the vast, vast majority of people are going to need a full-fledged learning system. And even for that minuscule portion of people for whom the “IF” applies… whatever the efficiency gain of LLM prompting over standard textbooks, there’s an even bigger efficiency gain of a full-fledged learning system over LLM prompting.

Want to get notified about new posts? Join the mailing list and follow on X/Twitter.

On a recent podcast, Zander asked how I’d go about learning an arbitrary new subject.

I get this question a lot but it’s kind of a hard question so I hadn’t really attempted to answer it until now.

I focused on the idea that – even if you have a good understanding of how learning happens and what kinds of practice techniques are effective – the hard part of the problem, that’s less in your control, is finding a high-quality curriculum.

Ideally an adaptive learning system that is both rigorous and comprehensive – and in most subjects that doesn’t seem to exist yet. Yes, there’s Math Academy… but what if you want to learn, say, biology? Where do you go?

I actually looked into this last year because I want to shore up my own biology knowledge (so I can have more in-depth conversations with my wife, who’s a graduate student in immunology / bioinformatics). And I couldn’t really find anything besides various textbooks and lecture-based courses.

Now don’t get me wrong, it’s possible to teach yourself from textbooks – in fact, that’s how I learned most of the math I know – but man is it brutally inefficient compared to a good adaptive learning system. And that inefficiency is really what’s prevented me from pulling the trigger on learning biology.

So on the podcast we got to talking about the idea of trying to use an LLM to emulate an adaptive learning system.

The idea being that – while the emulated system is probably not going to be as good as a system built by a company whose sole job is to build that system – if you prompt it with a lot of background knowledge on how efficient learning works, and correct erroneous pedagogical decisions whenever needed, maybe you can end up with a makeshift learning system that’s at least more efficient than standard textbooks.

Efficient enough to actually get me to pull the trigger on learning biology.

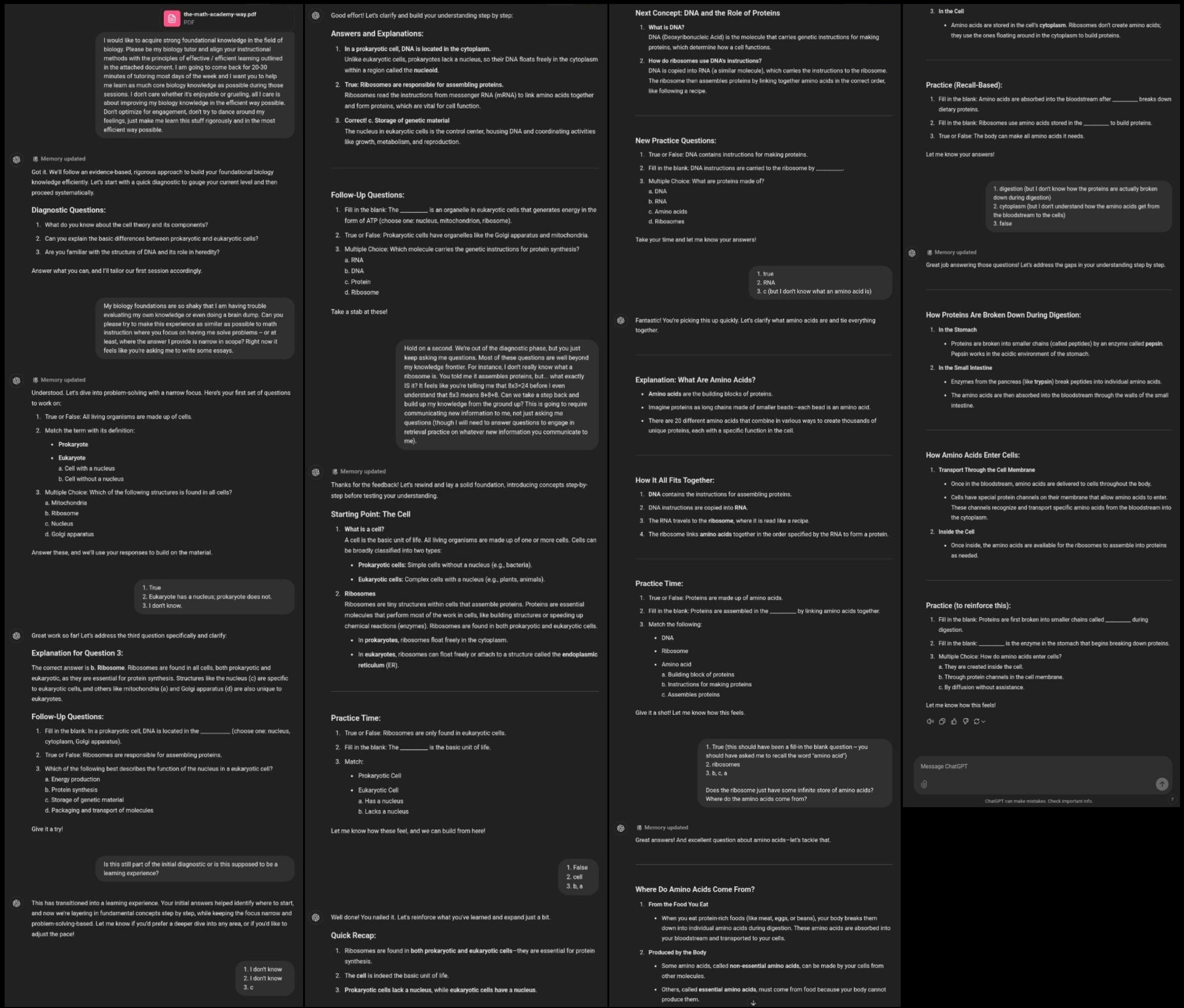

Session #1

I threw GPT-4o a PDF of the Math Academy Way along with the following prompt:

- “I would like to acquire strong foundational knowledge in the field of biology. Please be my biology tutor and align your instructional methods with the principles of effective / efficient learning outlined in the attached document. I am going to come back for 20-30 minutes of tutoring most days of the week and I want you to help me learn as much core biology knowledge as possible during those sessions. I don’t care whether it’s enjoyable or grueling, all I care is about improving my biology knowledge in the efficient way possible. Don’t optimize for engagement, don’t try to dance around my feelings, just make me learn this stuff rigorously and in the most efficient way possible.”

The results were actually pretty good. Not amazing, but efficient enough to get me to pull the trigger on learning biology for 20-30 minutes most days of the week.

(Zander recommended that I use Claude, and I know that’s the going advice here on X/Twitter, but Claude said the Math Academy Way PDF was 6x too big and asked me to split it into 6 separate files, so I just said screw it, I’m using ChatGPT. May switch over to try out Claude once I build up my biology learning habit a bit.)

Even after providing the main prompt along with the Math Academy Way PDF, I still had to correct ChatGPT on some pedagogical issues and get it on the rails before it started working well enough for me to start actually learning.

The first challenge was that, when it started out asking me some diagnostic questions, it was asking me things that seemed like essay prompts. Here’s the corrective feedback I gave:

- “My biology foundations are so shaky that I am having trouble evaluating my own knowledge or even doing a brain dump. Can you please try to make this experience as similar as possible to math instruction where you focus on having me solve problems — or at least, where the answer I provide is narrow in scope? Right now it feels like you’re asking me to write some essays.”

That seemed to fix the question type issue well enough to move on, but the next issue was that even after it said it was done with the diagnostic phase, it just kept asking me questions without presenting any new material to learn from. I had to explicitly ask it to provide me with instructional content:

- “Hold on a second. We’re out of the diagnostic phase, but you just keep asking me questions. Most of these questions are well beyond my knowledge frontier. For instance, I don’t really know what a ribosome is. You told me it assembles proteins, but… what exactly IS it? It feels like you’re telling me that 8x3=24 before I even understand that 8x3 means 8+8+8. Can we take a step back and build up my knowledge from the ground up? This is going to require communicating new information to me, not just asking me questions (though I will need to answer questions to engage in retrieval practice on whatever new information you communicate to me).”

At this point, it started providing brief instructional material followed by questions, and things started feeling right.

There was a minor snag with true/false questions that should have been fill-in-the-blank for retrieval practice, which I fixed as follows:

- *“ChatGPT: True or False: Proteins are made up of amino acids.*

  *Me: True (this should have been a fill-in the blank question — you should have asked me to recall the phrase ‘amino acid’)”*

And then going forward I also included supplemental feedback in my fill-in-the-blank response if I thought I was missing additional prerequisite knowledge:

- *“ChatGPT: Fill in the blank: Ribosomes use amino acids stored in the __________ to build proteins.*

  *Me: cytoplasm (but I don’t understand how the amino acids get from the bloodstream to the cells)”*

That seemed to work pretty well.

In the full session of about 25-30 minutes, I answered 18 questions. I probably spent about 10 of those minutes providing prompts and corrective feedback to keep ChatGPT on the rails pedagogically, which leaves roughly 15-20 minutes for the 18 questions themselves. I’m anticipating less of that overhead in the future, so I’m expecting to get through about 1 question per minute… not bad!

I copied the entire conversation into a text file so that next time, I can supply that file (and the Math Academy Way) and ask it to pick up where it left off.

- I’m also putting dates in the text file so that I can ask it to give me whatever spaced review it thinks I’m due for (I don’t have super high expectations for that, but hopefully it’ll be at least “decent,” meaning significantly better than a standard textbook).

- And soon I’ll also ask it to quiz me on all the material I’ve learned so far.

- I’m also going to inquire about the overall roadmap, see if it can tell me more about the sequence of stages in my upcoming biology learning journey (ideally with some kind of table of contents for each stage).
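To make the dated-log idea concrete, here is a minimal Python sketch of how dated entries could be turned into a list of topics due for review. Everything in it is an assumption made up for illustration: the `LogEntry` fields, the expanding interval schedule, and the sample dates are hypothetical. In the sessions described here, the scheduling is simply left to ChatGPT’s judgment.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Hypothetical expanding intervals (in days) between reviews.
# Illustrative assumption only, not a schedule taken from the sessions.
INTERVALS_DAYS = [1, 3, 7, 14, 30]


@dataclass
class LogEntry:
    topic: str          # what was quizzed, e.g. "ribosome"
    last_quizzed: date  # date written into the session log
    successes: int      # correct retrievals in a row so far


def next_due(entry: LogEntry) -> date:
    """Return the date on which this topic is next due for review."""
    idx = min(entry.successes, len(INTERVALS_DAYS) - 1)
    return entry.last_quizzed + timedelta(days=INTERVALS_DAYS[idx])


def due_topics(log: list[LogEntry], today: date) -> list[str]:
    """List topics whose review date has arrived, most overdue first."""
    due = [e for e in log if next_due(e) <= today]
    due.sort(key=next_due)
    return [e.topic for e in due]


# Made-up sample log; only topics that were actually quizzed get an entry.
log = [
    LogEntry("amino acids", date(2024, 9, 1), successes=1),
    LogEntry("ribosome", date(2024, 9, 1), successes=0),
    LogEntry("cytoplasm", date(2024, 9, 3), successes=2),
]
print(due_topics(log, today=date(2024, 9, 4)))  # ['ribosome', 'amino acids']
```

The one design choice worth noting is that the log only contains topics that were actually quizzed, so anything merely mentioned in passing never comes up for review.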

Overall, I would say the experience feels significantly more efficient than learning from a textbook – definitely not as efficient as a true adaptive learning system, but efficient enough to get me over the hump of starting to shore up my biology foundations.

That said – so far, this is all material that I was at one point familiar with (at least enough to pass an intro-level university biology course), so it remains to be seen how well things work out when I move from refreshing forgotten knowledge to learning completely new material. Learning completely new material is the true test of pedagogy; refreshing forgotten knowledge is more robust to pedagogical defects.

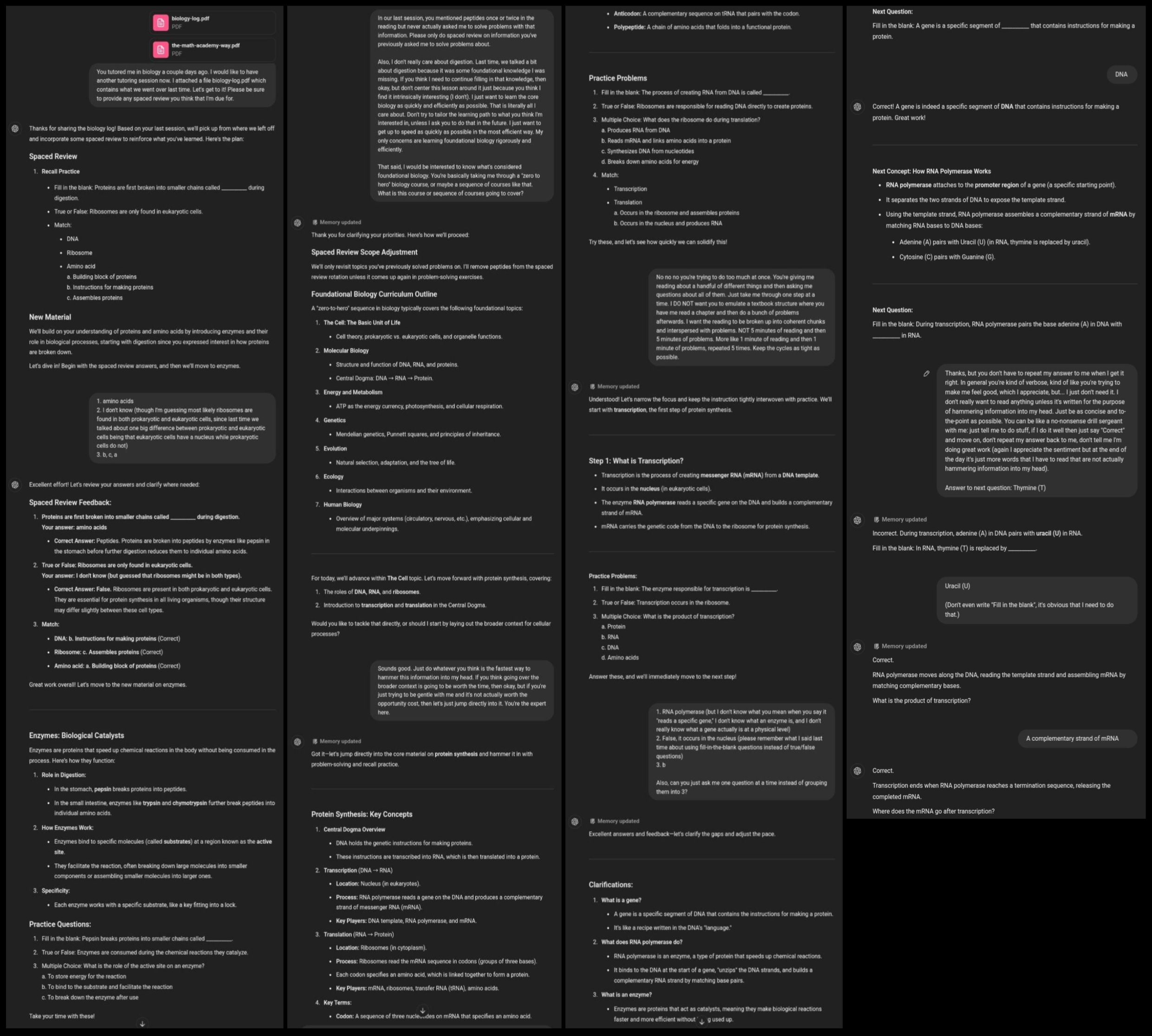

Session #2

I threw it the Math Academy Way (MA Way) again, along with a transcript of the previous session, and asked it to provide another session including some spaced review.

Things got off to a rocky start:

- it tried to give me spaced review on something it never actually tested me on, and

- it started down an inefficient learning path in an attempt to tailor the session to my (incorrectly) perceived interests.

I corrected these issues with the following prompt:

- *“In our last session, you mentioned peptides once or twice in the reading but never actually asked me to solve problems with that information. Please only do spaced review on information you’ve previously asked me to solve problems about.*

  *Also, I don’t really care about digestion. Last time, we talked a bit about digestion because it was some foundational knowledge I was missing. If you think I need to continue filling in that knowledge, then okay, but don’t center this lesson around it just because you think I find it intrinsically interesting (I don’t). I just want to learn the core biology as quickly and efficiently as possible. That is literally all I care about. Don’t try to tailor the learning path to what you think I’m interested in, unless I ask you to do that in the future. I just want to get up to speed as quickly as possible in the most efficient way. My only concerns are learning foundational biology rigorously and efficiently.”*

It then started asking me how I would like to go about the session: jump directly into the weeds on new content, or start with a high-level overview? On the surface that might sound like a nice question – but remember, I’m a novice and I just want to learn this stuff as efficiently as possible. I shouldn’t be making these pedagogical decisions – that’s the whole point of the tutor. I conveyed this with the following prompt:

- “Just do whatever you think is the fastest way to hammer this information into my head. If you think going over the broader context is going to be worth the time, then okay, but if you’re just trying to be gentle with me and it’s not actually worth the opportunity cost, then let’s just jump directly into it. You’re the expert here.”

After that, there was another pedagogical issue to correct:

- “No no no you’re trying to do too much at once. You’re giving me reading about a handful of different things and then asking me questions about all of them. Just take me through one step at a time. I DO NOT want you to emulate a textbook structure where you have me read a chapter and then do a bunch of problems afterwards. I want the reading to be broken up into coherent chunks and interspersed with problems. NOT 5 minutes of reading and then 5 minutes of problems. More like 1 minute of reading and then 1 minute of problems, repeated 5 times. Keep the cycles as tight as possible.”

And then I came across another knowledge gap that I had to explicitly ask it to backfill for me, and I had to remind it about a pedagogical issue I corrected last time:

- *“1. RNA polymerase (but I don’t know what you mean when you say it ‘reads a specific gene,’ I don’t know what an enzyme is, and I don’t really know what a gene actually is at a physical level)*

  *2. False, it occurs in the nucleus (please remember what I said last time about using fill-in-the-blank questions instead of true/false questions)*

  *3. b*

  *Also, can you just ask me one question at a time instead of grouping them into 3?”*

I also started getting fed up with its verbosity, which I fixed as follows:

- *“Thanks, but you don’t have to repeat my answer to me when I get it right. In general you’re kind of verbose, kind of like you’re trying to make me feel good, which I appreciate, but… I just don’t need it. I don’t really want to read anything unless it’s written for the purpose of hammering information into my head. Just be as concise and to-the-point as possible. You can be like a no-nonsense drill sergeant with me: just tell me to do stuff, if I do it well then just say ‘Correct’ and move on, don’t repeat my answer back to me, don’t tell me I’m doing great work (again I appreciate the sentiment but at the end of the day it’s just more words that I have to read that are not actually hammering information into my head).*

  *Answer to next question: Thymine (T)”*

Overall, I didn’t make a whole lot of progress today. I spent most of the time trying to get ChatGPT on the rails.

To improve the situation for next time, what I’m going to do is compile all of the feedback I’ve given ChatGPT and put that directly in the main prompt. All this stuff will of course be in the tutoring log PDF, but I probably need to make the really important feedback front-and-center.

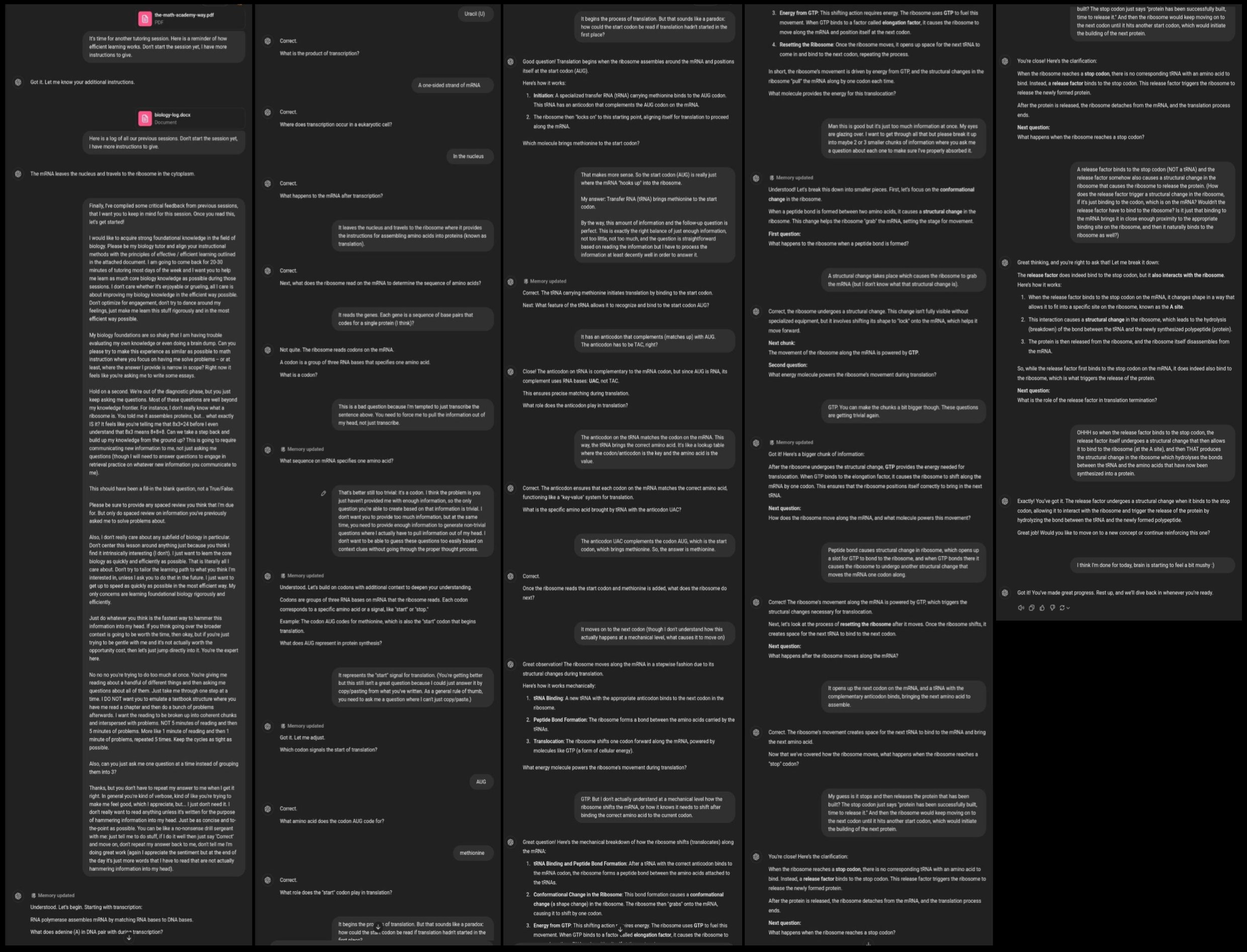

Session #3

Massive improvements in round 3! Finally settled into a rhythm where the process will work for me long-term.

The game-changing trick was – in addition to feeding it the MA Way and then a log of previous sessions – also reminding it of the particular critical feedback I had given it in previous sessions. (There was a bit more fine-tuning today but it was quick and minor.)

Granted, the efficiency is nowhere near MA-level, and even then it requires an experienced driver behind the wheel… but man is this more efficient than any other biology learning resource I’ve found. The friction has been reduced enough that learning biology finally feels realistic.

Here’s a dump of all the critical feedback that I’ve given it so far:

- *“I would like to acquire strong foundational knowledge in the field of biology. Please be my biology tutor and align your instructional methods with the principles of effective / efficient learning outlined in the attached document. I am going to come back for 20-30 minutes of tutoring most days of the week and I want you to help me learn as much core biology knowledge as possible during those sessions. I don’t care whether it’s enjoyable or grueling, all I care is about improving my biology knowledge in the efficient way possible. Don’t optimize for engagement, don’t try to dance around my feelings, just make me learn this stuff rigorously and in the most efficient way possible.*

  *My biology foundations are so shaky that I am having trouble evaluating my own knowledge or even doing a brain dump. Can you please try to make this experience as similar as possible to math instruction where you focus on having me solve problems – or at least, where the answer I provide is narrow in scope? Right now it feels like you’re asking me to write some essays.*

  *Hold on a second. We’re out of the diagnostic phase, but you just keep asking me questions. Most of these questions are well beyond my knowledge frontier. For instance, I don’t really know what a ribosome is. You told me it assembles proteins, but… what exactly IS it? It feels like you’re telling me that 8x3=24 before I even understand that 8x3 means 8+8+8. Can we take a step back and build up my knowledge from the ground up? This is going to require communicating new information to me, not just asking me questions (though I will need to answer questions to engage in retrieval practice on whatever new information you communicate to me).*

  *This should have been a fill-in the blank question, not a True/False.*

  *Please be sure to provide any spaced review you think that I’m due for. But only do spaced review on information you’ve previously asked me to solve problems about.*

  *Also, I don’t really care about any subfield of biology in particular. Don’t center this lesson around anything just because you think I find it intrinsically interesting (I don’t). I just want to learn the core biology as quickly and efficiently as possible. That is literally all I care about. Don’t try to tailor the learning path to what you think I’m interested in, unless I ask you to do that in the future. I just want to get up to speed as quickly as possible in the most efficient way. My only concerns are learning foundational biology rigorously and efficiently.*

  *Just do whatever you think is the fastest way to hammer this information into my head. If you think going over the broader context is going to be worth the time, then okay, but if you’re just trying to be gentle with me and it’s not actually worth the opportunity cost, then let’s just jump directly into it. You’re the expert here.*

  *No no no you’re trying to do too much at once. You’re giving me reading about a handful of different things and then asking me questions about all of them. Just take me through one step at a time. I DO NOT want you to emulate a textbook structure where you have me read a chapter and then do a bunch of problems afterwards. I want the reading to be broken up into coherent chunks and interspersed with problems. NOT 5 minutes of reading and then 5 minutes of problems. More like 1 minute of reading and then 1 minute of problems, repeated 5 times. Keep the cycles as tight as possible.*

  *Also, can you just ask me one question at a time instead of grouping them into 3?*

  *Thanks, but you don’t have to repeat my answer to me when I get it right. In general you’re kind of verbose, kind of like you’re trying to make me feel good, which I appreciate, but… I just don’t need it. I don’t really want to read anything unless it’s written for the purpose of hammering information into my head. Just be as concise and to-the-point as possible. You can be like a no-nonsense drill sergeant with me: just tell me to do stuff, if I do it well then just say ‘Correct’ and move on, don’t repeat my answer back to me, don’t tell me I’m doing great work (again I appreciate the sentiment but at the end of the day it’s just more words that I have to read that are not actually hammering information into my head).*

  *This is a bad question because I’m tempted to just transcribe the sentence above. You need to force me to pull the information out of my head, not just transcribe.*

  *I think the problem is you just haven’t provided me with enough information, so the only question you’re able to create based on that information is trivial. I don’t want you to provide too much information, but at the same time, you need to provide enough information to generate non-trivial questions where I actually have to pull information out of my head. I don’t want to be able to guess these questions too easily based on context clues without going through the proper thought process.*

  *You’re getting better but this still isn’t a great question because I could just answer it by copy/pasting from what you’ve written. As a general rule of thumb, you need to ask me a question where I can’t just copy/paste.*

  *I need exactly the right balance of just enough information, not too little, not too much, and the question needs to be straightforward based on reading the information but I have to process the information at least decently well in order to answer it.*

  *Man this is good but it’s just too much information at once. My eyes are glazing over. I want to get through all that but please break it up into maybe 2 or 3 smaller chunks of information where you ask me a question about each one to make sure I’ve properly absorbed it.”*
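For anyone who would rather not paste this together by hand each session, the assembly step could be sketched roughly as follows. This is only an illustration under assumptions: the function name `build_session_prompt`, the section headings inside the prompt, and the shortened feedback items (paraphrases of the feedback above) are all hypothetical, and the resulting text would still be pasted into the chat alongside the attached MA Way PDF.

```python
def build_session_prompt(base_prompt: str, feedback: list[str], log_text: str) -> str:
    """Combine the standing request, accumulated feedback, and prior session log."""
    # Number the feedback so each standing rule is easy to point back to.
    rules = "\n".join(f"{i}. {item}" for i, item in enumerate(feedback, start=1))
    return (
        f"{base_prompt}\n\n"
        "Standing feedback from previous sessions (follow all of it):\n"
        f"{rules}\n\n"
        "Log of previous sessions:\n"
        f"{log_text}\n\n"
        "Please pick up where we left off and include any spaced review I am due for."
    )


# Hypothetical, shortened inputs for illustration.
base = "Please be my biology tutor and follow the attached document."
feedback = [
    "Use fill-in-the-blank questions, not true/false.",
    "Ask one question at a time.",
    "When I am right, just say 'Correct' and move on.",
]
log_text = "Session 1: proteins, amino acids, ribosomes."

print(build_session_prompt(base, feedback, log_text))
```

Keeping the feedback as a separate numbered list, ahead of the log, is what puts it front-and-center rather than leaving it buried in the transcript.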

Conclusions

I should point out that this is kind of a “magic demo” where it might look cool/promising but there’s a subtle trick that I’m using to make the situation WAY easier in my particular use-case than it is in general reality.

The trick, the secret sauce, is that I’m continually leveraging my own expertise on efficient learning. I’m continually supplying nudges and recalibrations to keep the chat on the rails. The moment I start behaving more like a typical student, or even a serious student who is not an expert on efficient learning, things go completely off the rails.

It’s not always instantaneous. But it’s like there’s a compounding misalignment that, without continual nudges and recalibrations, eventually reaches a critical level and drives you off the road into a ditch.

That’s really the hardest part of building an effective learning system – keeping students on the road. Most people do not have a good enough understanding of what effective training entails to oversee/manage the process themselves. Left to their own devices they will typically derail the process if they’re given enough agency to do so. Even if they don’t mean to.

Overall my conclusion is that

- IF you could learn successfully, long-term, by self-studying textbooks on your own, and the only thing keeping you from learning a new subject is a slight lack of time,

- THEN you can probably use LLM prompting to speed up that process a bit, which can help you pull the trigger on learning some stuff you previously didn’t have time for.

BUT the “IF” here applies to a minuscule portion of people. The vast, vast majority of people are going to need a full-fledged learning system.

And even for that minuscule portion of people for whom the “IF” applies… whatever the efficiency gain of LLM prompting over standard textbooks, there’s an even bigger efficiency gain of a full-fledged learning system over LLM prompting.
